AI Megathread

-

@Faraday said in AI Megathread:

@bored said in AI Megathread:

Thrash and struggle as we may, but this is the world we live in now.

Only if we accept it.

The actors and writers in Hollywood are striking because of it.

Lots of folks in the writing community are boycotting AI-generated covers or ChatGPT-generated text, and there’s backlash against those who use them.

Lawsuits are striking back against the copyright infringement.

Again, to be clear, I’m not against the underlying technology, only the unethical use of it.

If a specific author or artist wants to train a model on their own stuff and then use it to generate more stuff like their own? More power to them. (It won’t work as well, because the real horsepower comes from the sheer volume of trained work, but that’s a separate issue.)

If MJ were only trained on a database of work from artists who had opted in and were paid royalties for every image generated? (like @Testament mentioned for Shutterstock - 123rf and Adobe have similar systems) That’s fine too (assuming the royalty arrangements are decent - look to Spotify for the dangers there).

These tools are products, and consumers have an influence in whether those products are commercially successful.

Right now at work I’m having a horrendous time dealing with upper management who are just uncritical (and frankly, experienced enough to know better) about this sort of thing.

“ChatGPT told <Team Member x> to do thing Y with Python! It even wrote them the script!”

Me: Uhh, do they have any idea how this works? Or how to even read the script?

Dead silence.

I’m not letting this go on in my org without a fight.

-

@bored said in AI Megathread:

@Faraday There will be a legal shake-out, for sure. I’m not optimistic that starving artists (a group not traditionally known for their ability to afford expensive lobbyists) are going to win, though. While Midjourney is a bit of a black box (given their for-profit model), the idea of figuring out valid royalties for Stable Diffusion’s training data is getting into counting grains of sand on the beach territory. Given the open-source nature and proliferation of descendant models… what can you do? The only answer is to ban the technology completely outside of… approved, licensed models, which would almost certainly just be MORE of a corporate coup as ownership of those big data sets will become prized.

I brought this one up because I think the Hasbro stuff is an interesting case of it being mainstreamed and placed into the professional space (And it’s RPGs. Exactly what we discuss here.) Notably, the book has artist credits! These are a bunch of regular WotC contributors, and the art is in familiar styles (although also, the artist whose work it most looks like is not in the credits). Something got arranged here. Some people got paid. But maybe not all the people. WotC presumably owns the rights to every bit of D&D and (vastly more) MTG art. That is a big enough set to train on. They’re probably not paying most of those people.

There will be laws for all this stuff eventually, but the idea that the creative industries come out on top seems very slim. Copywriting is already essentially being annihilated as a profession. I don’t see how it goes any other way.

Y’all ever think all of this shit is just incompatible with capitalism?

Or rather, ever think that capitalism is just incompatible with life at this point?

I’ll cop to oversimplifying things and thumping Marx and Marcuse here, but IMO the quiet part hasn’t been said loud enough in all of this mess.

-

@bored said in AI Megathread:

Are we fans of the DMCA now?

There’s certainly room to improve copyright laws, but am I a fan of the broad principles behind it? Of protecting creators’ work? Absolutely.

Creators are going nowhere because large language models and stable diffusion type graphic models are dumb.

I don’t just mean they’re dumb on principle, I mean they’re dumb logically and artistically. They’re parrots who don’t understand the world they’re parroting. They don’t understand jack about the human experience.

They can never write a news article about an event that just happened - not until some human writes it first so they can copy it. They can’t write a biography about a person who hasn’t been born yet, or generate an image of Ford’s new automobile, or write late-night jokes about the day’s events - not until a human has done something for them to copy. They will not - and can not - ever generate something truly original, inspired, or new.

Now to be fair, a lot of human creations are unoriginal and uninspired too, but we at least have the potential to do better. Generative AI doesn’t.

And this isn’t just a bug that they can fix in version 2.0. It’s baked into the very core of how they work.

JMS had a great essay on this.

I also liked Adam Conover’s video essay.

I don’t mean to downplay the harm that generative AI tools can cause to creatives. But this tech is nowhere near as transformative as the tech hype would like us to believe.

-

@SpaceKhomeini said in AI Megathread:

Y’all ever think all of this shit is just incompatible with capitalism?

The unrestrained drive for ever increasing profit will result in reform, revolution, or societal collapse. So yes.

You could probably keep capitalism going indefinitely even with shit like this if you implemented a strong safety program, but the plutocrats are too stupid even to do that. So!

-

I think we’re just going to see writing and graphical art go the same way as physical art that can be mass-produced. I can get an imitation porcelain vase mass produced out of a factory for $10 or I can get a handmade artisan-crafted porcelain vase for $1000. They may seem alike but a critical eye can spot the difference and some people may care about that.

-

@SpaceKhomeini said in AI Megathread:

@Faraday said in AI Megathread:

@bored said in AI Megathread:

Thrash and struggle as we may, but this is the world we live in now.

Only if we accept it.

The actors and writers in Hollywood are striking because of it.

Lots of folks in the writing community are boycotting AI-generated covers or ChatGPT-generated text, and there’s backlash against those who use them.

Lawsuits are striking back against the copyright infringement.

Again, to be clear, I’m not against the underlying technology, only the unethical use of it.

If a specific author or artist wants to train a model on their own stuff and then use it to generate more stuff like their own? More power to them. (It won’t work as well, because the real horsepower comes from the sheer volume of trained work, but that’s a separate issue.)

If MJ were only trained on a database of work from artists who had opted in and were paid royalties for every image generated? (like @Testament mentioned for Shutterstock - 123rf and Adobe have similar systems) That’s fine too (assuming the royalty arrangements are decent - look to Spotify for the dangers there).

These tools are products, and consumers have an influence in whether those products are commercially successful.

Right now at work I’m having a horrendous time dealing with upper management who are just uncritical (and frankly, experienced enough to know better) about this sort of thing.

“ChatGPT told <Team Member x> to do thing Y with Python! It even wrote them the script!”

Me: Uhh, do they have any idea how this works? Or how to even read the script?

Dead silence.

I’m not letting this go on in my org without a fight.

Please tell me you’re documenting this, because this is a security breach waiting to happen, or other litigation.

-

@dvoraen said in AI Megathread:

@SpaceKhomeini said in AI Megathread:

@Faraday said in AI Megathread:

@bored said in AI Megathread:

Thrash and struggle as we may, but this is the world we live in now.

Only if we accept it.

The actors and writers in Hollywood are striking because of it.

Lots of folks in the writing community are boycotting AI-generated covers or ChatGPT-generated text, and there’s backlash against those who use them.

Lawsuits are striking back against the copyright infringement.

Again, to be clear, I’m not against the underlying technology, only the unethical use of it.

If a specific author or artist wants to train a model on their own stuff and then use it to generate more stuff like their own? More power to them. (It won’t work as well, because the real horsepower comes from the sheer volume of trained work, but that’s a separate issue.)

If MJ were only trained on a database of work from artists who had opted in and were paid royalties for every image generated? (like @Testament mentioned for Shutterstock - 123rf and Adobe have similar systems) That’s fine too (assuming the royalty arrangements are decent - look to Spotify for the dangers there).

These tools are products, and consumers have an influence in whether those products are commercially successful.

Right now at work I’m having a horrendous time dealing with upper management who are just uncritical (and frankly, experienced enough to know better) about this sort of thing.

“ChatGPT told <Team Member x> to do thing Y with Python! It even wrote them the script!”

Me: Uhh, do they have any idea how this works? Or how to even read the script?

Dead silence.

I’m not letting this go on in my org without a fight.

Please tell me you’re documenting this, because this is a security breach waiting to happen, or other litigation.

At work we were recently talking about AI Hallucination Exploits. Essentially, sometimes AI-generated code calls imports for nonexistent libraries. Attackers can upload so-named libraries to public repositories, full of exploits. Hilarity and bottom-line-affecting events ensue.

-

@shit-piss-love said in AI Megathread:

I think we’re just going to see writing and graphical art go the same way as physical art that can be mass-produced. I can get an imitation porcelain vase mass produced out of a factory for $10 or I can get a handmade artisan-crafted porcelain vase for $1000. They may seem alike but a critical eye can spot the difference and some people may care about that.

As I just mentioned off of this thread, I’m from a family of musicians (only ever a hobbyist myself, but still). The idea that you can make close to a living as one is rather laughable at this point. The apocalypse in this field already happened long before The Zuck opened up his magic generative AI music box.

A myriad of factors already killed your average working musician’s ability to make a living (you’re probably not going to be Bjork, and the days of well-compensated session musicians are kind of gone).

And just like Hollywood, the music industry is already figuring out how to use AI so they never have to sign or employ anyone ever again. But even without AI, the vast majority of commercially-viable pop music being written by the same couple of middle-aged Swedish dudes sort of had the same effect.

For sure, generative AI as a replacement for real artistry is a grotesque insult, but until we start deciding that artists and people in general should be allocated resources to have their needs met regardless of the commercial viability and distribution of their product, we’re just going to be kicking this can down the road.

-

@dvoraen said in AI Megathread:

@SpaceKhomeini said in AI Megathread:

@Faraday said in AI Megathread:

@bored said in AI Megathread:

Thrash and struggle as we may, but this is the world we live in now.

Only if we accept it.

The actors and writers in Hollywood are striking because of it.

Lots of folks in the writing community are boycotting AI-generated covers or ChatGPT-generated text, and there’s backlash against those who use them.

Lawsuits are striking back against the copyright infringement.

Again, to be clear, I’m not against the underlying technology, only the unethical use of it.

If a specific author or artist wants to train a model on their own stuff and then use it to generate more stuff like their own? More power to them. (It won’t work as well, because the real horsepower comes from the sheer volume of trained work, but that’s a separate issue.)

If MJ were only trained on a database of work from artists who had opted in and were paid royalties for every image generated? (like @Testament mentioned for Shutterstock - 123rf and Adobe have similar systems) That’s fine too (assuming the royalty arrangements are decent - look to Spotify for the dangers there).

These tools are products, and consumers have an influence in whether those products are commercially successful.

Right now at work I’m having a horrendous time dealing with upper management who are just uncritical (and frankly, experienced enough to know better) about this sort of thing.

“ChatGPT told <Team Member x> to do thing Y with Python! It even wrote them the script!”

Me: Uhh, do they have any idea how this works? Or how to even read the script?

Dead silence.

I’m not letting this go on in my org without a fight.

Please tell me you’re documenting this, because this is a security breach waiting to happen, or other litigation.

Here’s the fun part where I tell you that one of the clueless people involved has a cybersec job title.

-

@SpaceKhomeini said in AI Megathread:

For sure, generative AI as a replacement for real artistry is a grotesque insult, but until we start deciding that artists and people in general should be allocated resources to have their needs met regardless of the commercial viability and distribution of their product, we’re just going to be kicking this can down the road.

Absolutely. I’m in no way endorsing the destructive effects AI is going to have. I just don’t think you can put the genie back in the bottle with technology. There doesn’t seem to be a good way out with our economic model. We could be living in a world filled with so much more beauty and culture.

-

@Rinel They don’t have to steal data. They can just… get people to consent to scans (which will be far more exhaustive and better for training than random scraping). Maybe even pay them a pittance!

The point was that they have the resources to do it, and are simultaneously one of the major movers behind the technology. The idea that the data necessary to generate convincing people is hard to acquire is just… it’s not. The movie industry ‘scan extras’ thing was being done for other reasons, not because it’s hard to find humans. I would assume they probably already have it available; Google’s AI models are not based on the broad, wild-west scraping that Stable Diffusion is.

@SpaceKhomeini Yes. If people are reading this as… pro big business, I have no idea how.

@Faraday said in AI Megathread:

@bored said in AI Megathread:

Are we fans of the DMCA now?

There’s certainly room to improve copyright laws, but am I a fan of the broad principles behind it? Of protecting creators’ work? Absolutely.

Principles are great. But what actually happens when the government intervenes to protect stakeholders from technology? At no point is the answer ‘the little guy profits.’ (See, for instance, DMCA takedowns against YouTubers).

I don’t just mean they’re dumb on principle, I mean they’re dumb logically and artistically. They’re parrots who don’t understand the world they’re parroting. They don’t understand jack about the human experience.

Be that as it may, artists are clearly threatened by them. And I can see why.

Working with a home install on consumer-grade gaming hardware, I can, with a bit of learning and a small amount of effort, generate images that are arguably more aesthetically pleasing than what most amateur humans produce, in what is probably a hundredth of the time, with much closer control than I’d get trying to communicate needs to an artist and going through a revision process. For me, this is just fun to play around with, yet I see how that could be immensely valuable to a lot of people. AI can’t replace top 20% human creative talent, maybe, but I think it will obliterate the vast midrange of it. I started this tangent with the largest company in our hobby selling a 60 dollar hardcover book with AI art. That’s where we’re at. I thought it was interesting, anyway.

-

BTW, Latest main branch Evennia now supports adding NPCs backed by an LLM chat model.

https://www.evennia.com/docs/latest/Contribs/Contrib-Llm.html

-

@Griatch Oh man, I want to see that implemented! Are any games using it? Was Jumpscare doing something with that?

-

@Tez said in AI Megathread:

@Griatch Oh man, I want to see that implemented! Are any games using it? Was Jumpscare doing something with that?

It’s either that or she created some really great NPC dialogue on a trigger. Silent Heaven is one of the most technically impressive RPI games out there, in my opinion.

-

@somasatori I can’t speak for Jumpscare, but I’m pretty sure no game is using this yet. It’s been in the main branch for two weeks or so.

-

@somasatori said in AI Megathread:

@Tez said in AI Megathread:

@Griatch Oh man, I want to see that implemented! Are any games using it? Was Jumpscare doing something with that?

It’s either that or she created some really great NPC dialogue on a trigger. Silent Heaven is one of the most technically impressive RPI games out there, in my opinion.

Thanks! I didn’t use an LLM for anything. I wrote all the automated responses.

-

@bored said in AI Megathread:

Working with a home install on consumer-grade gaming hardware, I can, with a bit of learning and a small amount of effort, generate images that are arguably more aesthetically pleasing than what most amateur humans produce, in what is probably a hundredth of the time, with much closer control than I’d get trying to communicate needs to an artist and going through a revision process.

I’ve been using Midjourney for quite some time now, and I can say with an extreme amount of confidence that this is not only blatantly incorrect as regards any art commissioned from an actual artist, but is actually incorrect as regards any art that displays any degree of complexity in what it depicts, even when done in comparison to a person who cannot draw.

You cannot make Midjourney (or Stable Diffusion, or any other model currently available to the public) generate an image of Pikachu, wearing a crown and royal cape, in a lightsaber duel on the moon with an angry duck. I can draw that in MS Paint, and people will know what is happening in the image, even though I cannot draw.

LLMs can’t even replace 95% of human talent–they can provide crude emulations that greedy corporations and some people with absurdly low standards will accept on the basis that it’s free. If you aren’t generating static portraits, basic landscapes, or the most cliched interactions, LLM image generators are, at best, a way to jumpstart an artist’s imagination.

A much more interesting use of LLMs is to abandon representational goals of art entirely and embrace the weird divinatory aspects of throwing abstraction into a[n ethically created] LLM black box and seeing what it creates from it. Cut down the arborescent models; embrace rhizomatic image generation. This is the only way that LLMs can be a part of actual art–the art will be in the process of human-mystery interaction, not in the end result.

-

@bored said in AI Megathread:

The official RPGs are doing it, so why shouldn’t you?! Hasbro has announced AI DMs as an upcoming feature for D&D Beyond, and they were just caught using AI generated art in their newest upcoming/just releasing book, with some pretty blatantly poor looking results.

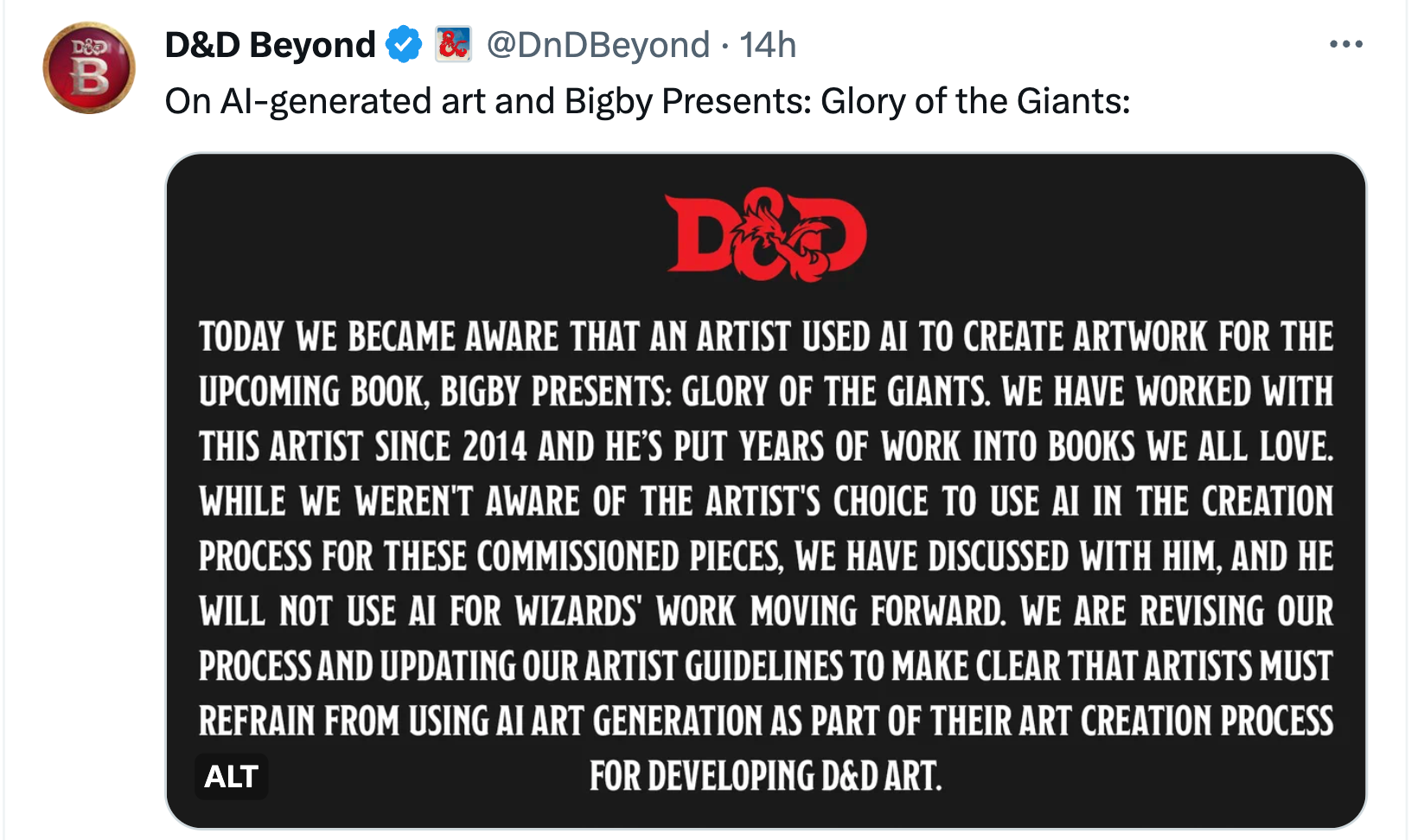

I’m not sure if this is the sourcebook you were referring to, but either way it seems relevant to the discussion. As more and more publishers face backlash for using AI art, some are doubling down but others are at least doing the right thing. It’s not inevitable that this hype-induced trend will continue without consequence.

“We are revising our process and updating our artist guidelines to make clear that artists must refrain from using AI art generation as part of their art creation process for developing D&D art.”

One other thing that I think will factor into all this - the US copyright office has ruled that AI-generated stuff can’t be copyrighted. So that means if someone does publish a book with an AI-generated cover image, they’d have no rights to it. Because anyone using the same prompt/seed could generate that exact same image in the AI tools and use it too.